I have NSX 6.1.2 deployed on vSphere 5.5 and wanted to share the details of a specific bug applicable to this version of NSX that I came across, especially since it doesn’t have a relevant KB article published for it.

After setting up the NSX manager and all the host preparations including VXLAN prep, I had deployed a number of DLR instances, each with multiple internal interfaces and an uplink interface which in tern, was connected to an Edge Gateway instance for external connectivity. And I kept on getting communication issues between certain interfaces of the DLR, where by for example, the internal interfaces connected to vNIC 1 & 2 would communicate with one another (I can ping from a VM on VXLAN 1 attached to the internal interface on vNIC 1 to a VM on VXLAN 2 attached to the internal interface on vNIC 2) but none of them would talk to the internal interface 3, attached to the DLR vNic 3 or even uplink interface on vNic 0 (Cannot ping the Edge gateway). The interfaces that cannot communicate were completely random however and was persistent on multiple DLR instances deployed. All of them had one thing in common which was that no internal interface would talk to the uplink interface IP (of the Edge gateway attached to the other end of the uplink interface).

One symptom of the issue was what was described in this blog post I posed on the VMware communities page, at https://communities.vmware.com/thread/505542

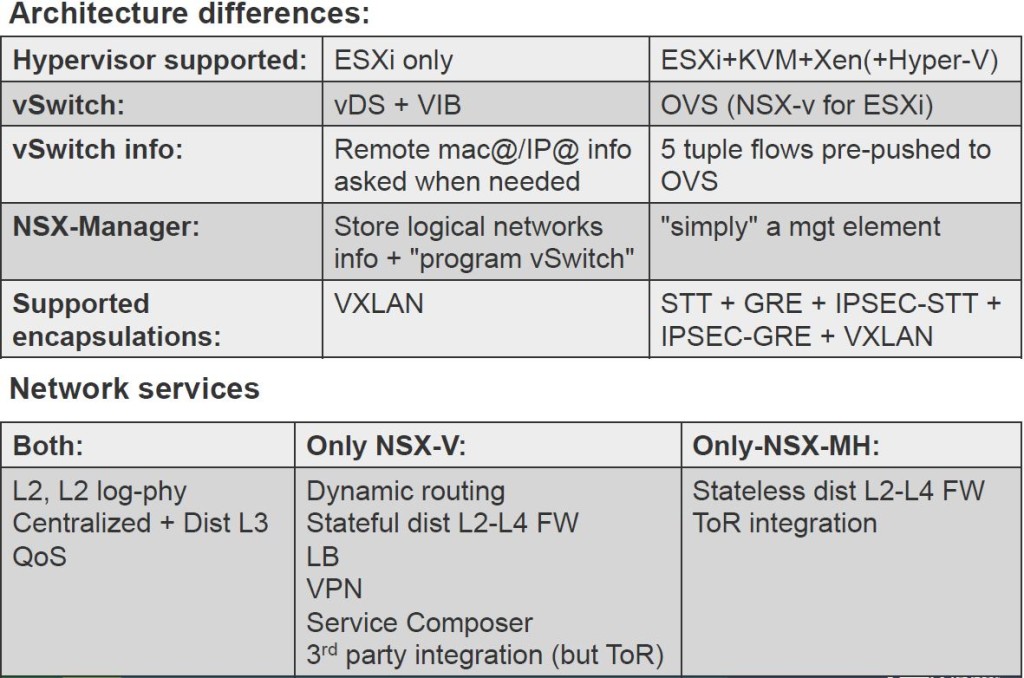

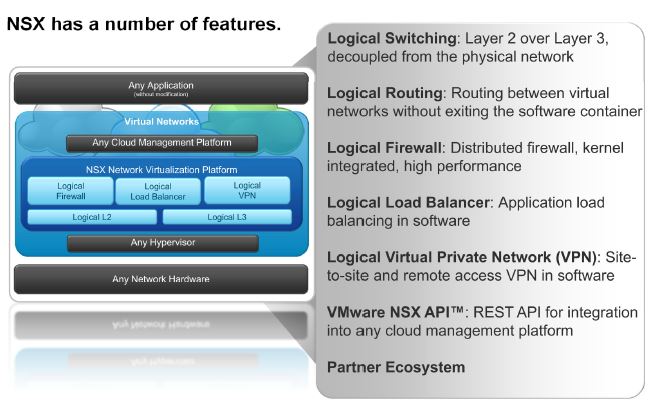

Finally I had to log a call with NSX support at VMware GSS and according to their diagnosis, it turned out to be an inconsistency issue with the netcpa daemon running on the ESXi hosts and its communication with the NSX controllers. (FYI – netcpa gets deployed during the NSX host preparation stage, as a part of the User World Agents and is responsible for communication between DLR and the NSX controllers as well as the VXLAN and NSX controllers- see the diagram here)

During the troubleshooting, it transpired that some details (such as VXLAN details) were out of sync between the hosts and the controllers (different from what was shown as the VXLAN & VNI configuration in the GUI) and the temporary fix was to stop and start the netcpa daemon on each of the hosts in the compute & edge cluster ESXi nodes (commands “/etc/init.d/netcpad stop” followed up by “/etc/init.d/netcpad start” on the ESXi shell as root).

Having analysed the logs thereafter, VMware GSS confirmed that this was indeed an internally known issue with NSX 6.1.2. Their message was “This happens due to a problem in the tcp connection handshake between netcpa and the manager once the last one is rebooted (one of the ACKs is not received by netcpa, and it does not retry the connection). This fix added re-connect for every 5 seconds until the ACK is received“. Unfortunately, there’s no published KB article out (as of now) for this issue which doesn’t help much. expecially if you deploying this in a lab…etc.

This issue has (allegedly) been resolved in NSX version 6.1.3 even thought its not explicitly stated within the release notes as of yet (the support engineer I dealt with mentioned that he’s requested this to be added)

So, if you have similar issues with NSX 6.1.2 (or lower possibly), this may well be the way to go about it.

One thing I did lean during the troubleshooting process (which was probably the most important thing that came out of this whole issue for me, personally) was the understanding of the importance of the net-vdr command, which I should emphasize here though. Currently, there are no other ways to check if the agents running on the ESXi hosts have the correct configuration settings to do with NSX other than looking at it on command line…. I mean, you can force a resync of the appliance configuration or redeploy the appliances themselves using NSX GUI but that doesn’t necessarily update the ESXi agents and was of no help in my case mentioned above.

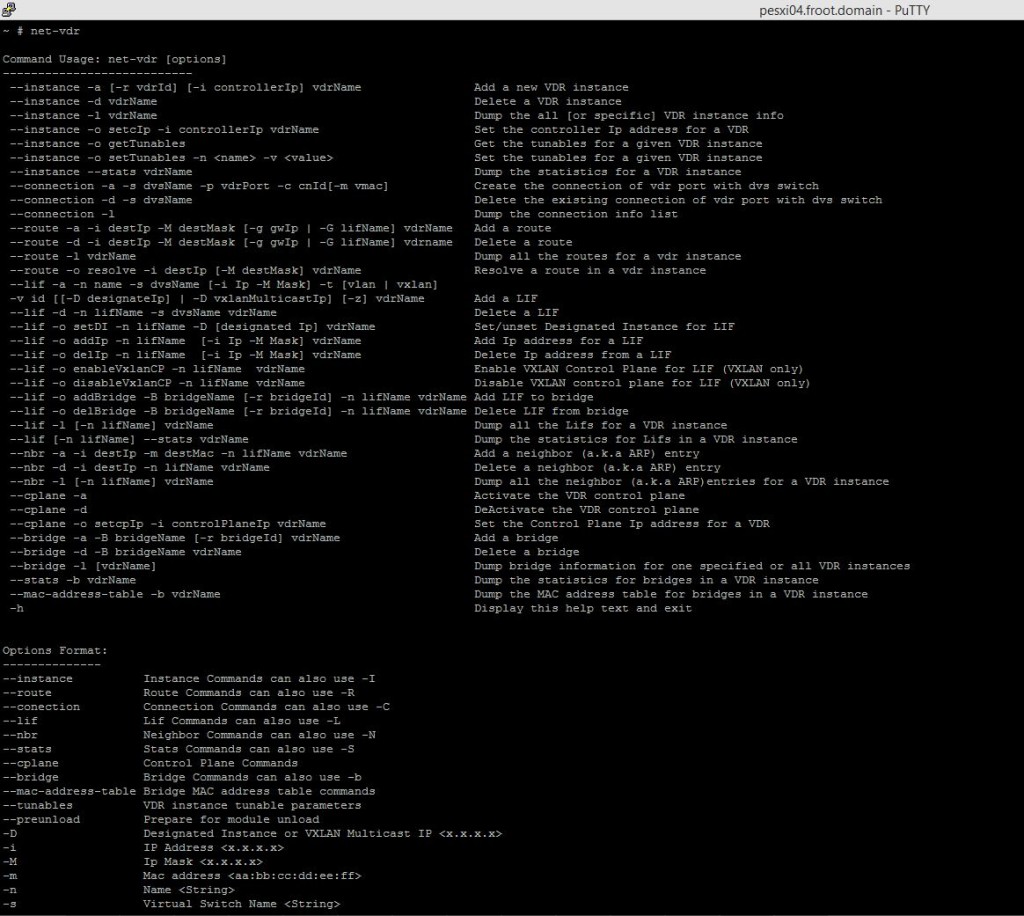

net-vdr command lets you perform a number of useful operations relating to DLR, from basic operations such as adding / deleting a new VDR (Distributed Router) instance, dumpi9ng the instance info, configure DRl settings including changing the controller details, adding / deleting DLR route details, listing all DLR instances, ARP operations such as show, add & delete ARP entries in the DLR & DLR Bridge operations and has turned out to be real handy for me to do various troubleshooting & verification operations regarding DLR settings. Unfortunately, there don’t appear to be much documentation on this command and its use, not on the VMware NSX documentation NOR within the vSphere documentation, at least as of yet, hence why I thought I’d mention it here.

Given below is an output of the commands usage,

So, couple of examples….

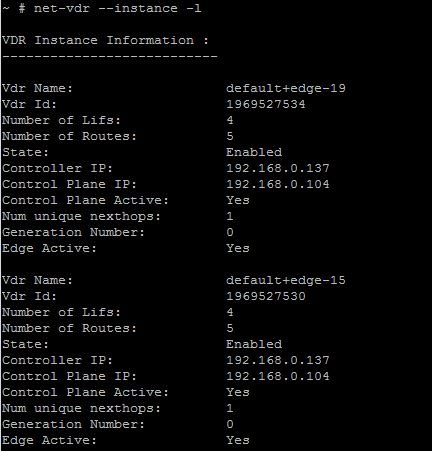

If you want to list all the DLR instances deployed and their internal names, net-vdr –instance -l will list out all the DLR instances as how ESXi sees them.

If you NSX GUI says that you have 4 VNI’s 5000, 5001, 5002 & 5003 defined and you need to check whether if all 4 VNI configurations are also present on the ESXi hosts, net-vdr -L -l default+edge-XX (where XX is the unique number assigned to each of your DLR) will show you all the DLR interface configuration, as how the ESXi host sees it.

If you want to see the ARP information within each DLR, net-vdr –nbr -l default+edge-XX (where XX is the unique number assigned to each DLR) will show you the ARP info.

Hope this would be of some use to those early adaptors of NSX out there….

Cheers

Chan