As part of the recently concluded Storage Field Day 12 (#SFD12), we traveled to one of the Intel campuses in San Jose to hear the Intel Storage software team talk about the future of storage from an Intel perspective (you can read all about it here). Just before that session, we were treated to an equally interesting session by SNIA, the Storage Networking Industry Association. I want to share what I learnt during that session, which I think is very relevant to anyone with a vested interest in the field of IT today.

The presenters were Michael Oros, Executive Director at SNIA, along with Mark Carlson, who co-chairs the SNIA technical council.

Introduction to SNIA

SNIA is a non-profit organisation that was formed 20 years ago to deal with the inter-operability challenges of network storage across different tech vendors. Today there are over 160 active member organisations (tech vendors) who work together behind closed doors to set standards and improve the inter-operability of their often competing tech solutions out in the real world. The alphabetical list of all SNIA members is available here, and it includes key network and storage vendors such as Cisco, Broadcom, Brocade, Dell, Hitachi, HPe, IBM, Intel, Microsoft, NetApp, Samsung & VMware. Effectively, anyone using almost any enterprise datacenter technology has likely benefited from SNIA-defined industry standards and inter-operability.

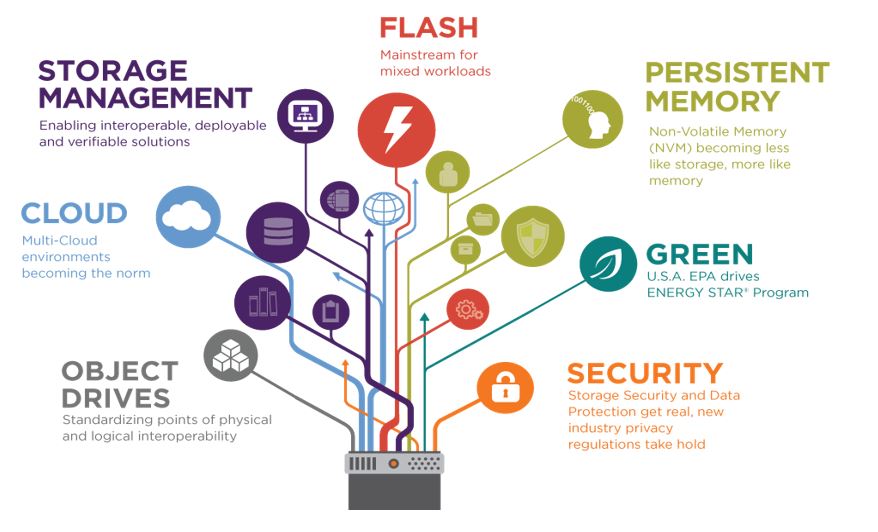

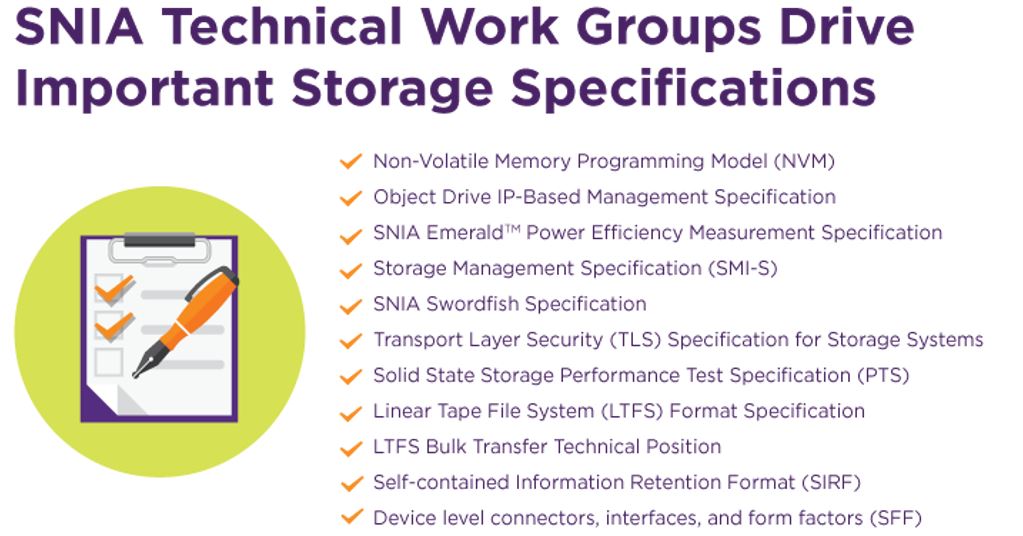

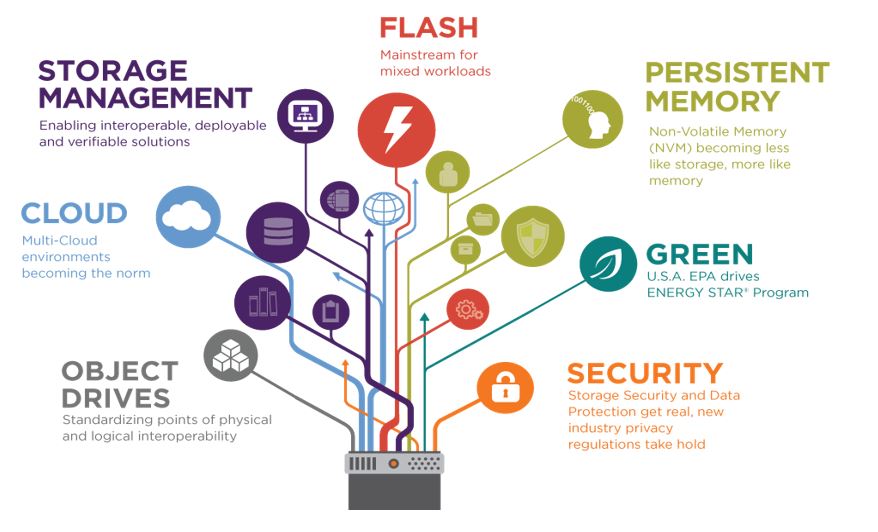

Some of the existing storage-related initiatives SNIA is working on include the following.

Hyperscaler (Public Cloud) Storage Platforms

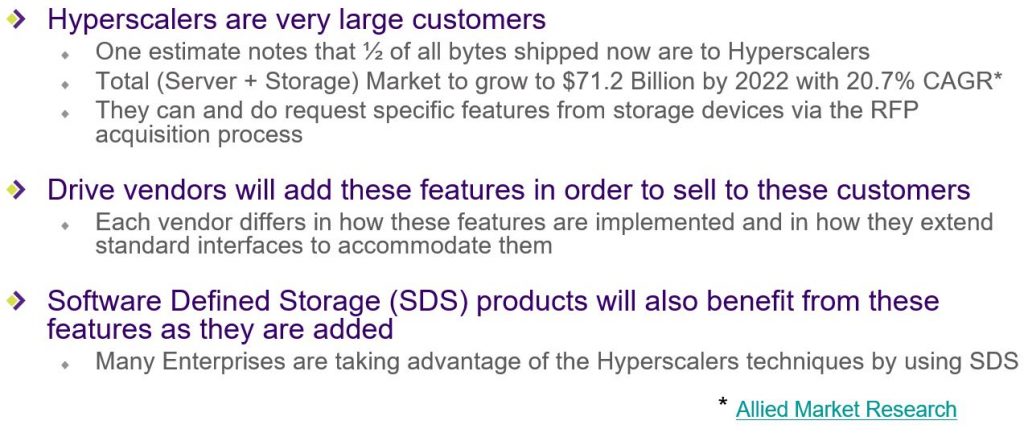

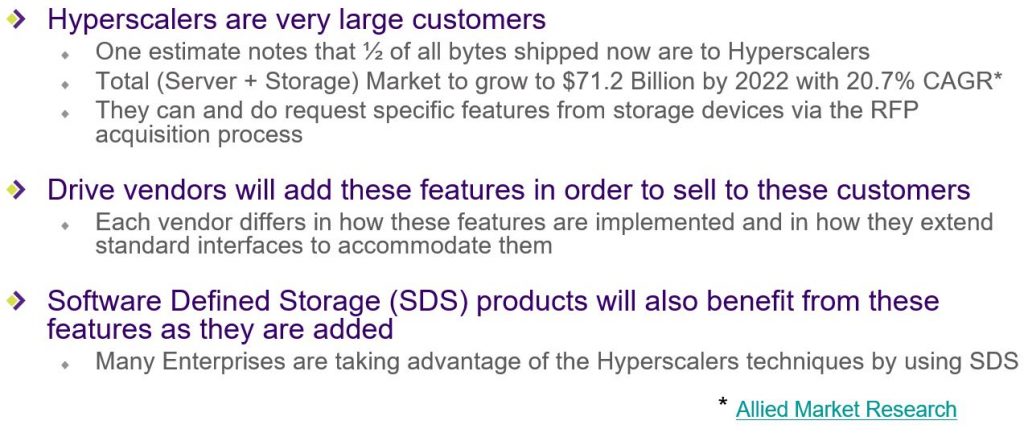

According to SNIA, Public cloud platforms, AKA Hyperscalers, such as AWS, Azure, Facebook, Google, Alibaba…etc are now starting to make an impact on how disk drives are designed and manufactured, given their large consumption of storage drives and therefore their vast buying power. To understand the impact of this on the rest of the storage industry, let's first clarify a few key points about these Hyperscaler cloud platforms (for those who didn't know):

- Public Cloud providers DO NOT buy enterprise hardware components like the average enterprise customer

- They DO NOT buy enterprise storage systems (Sales people please read “no EMC, no NetApp, no HPe 3PAR…etc.”)

- They DO NOT buy enterprise networking gear (Sales people please read “no Cisco switches, no Brocade switches, no HPe switches…etc.”)

- They DO NOT buy enterprise servers from server manufacturers (Sales people please read “no HPe/Dell/Cisco UCS servers…etc.”)

- They build most things in-house

- Often this includes servers, network switches…etc

- They DO bulk buy disk drives direct from the disk manufacturers & use home-grown Software Defined Storage techniques to provision that storage.

Now if you think about it, large enterprise storage vendors like Dell and NetApp, who normally bulk buy disk drives from manufacturers such as Samsung, Hitachi, Seagate…etc, would have had a certain level of influence over how those drives are made, given the economies of scale (bulk purchasing power) they had. Now, however, Public cloud providers, who also bulk buy and often in far bigger quantities than those storage vendors, have become hugely influential over how these drives are made, to the point that their influence exceeds that of the legacy storage vendors. This influence has grown such that Public Cloud providers now have direct input into the initial design of these components (i.e. disk drives…etc.) and how they are manufactured. This is simply due to their enormous bulk purchasing power, as well as their ability, given their global data center footprint, to test drive performance at a scale that was not previously possible even for the drive manufacturers.

Their focus on Software Defined Storage technologies, which aggregate all the disparate disk drives found in their data center servers, is inevitably leading to architectural changes in how disk drives need to be made going forward. For example, most legacy enterprise storage arrays rely on the old RAID technology to rebuild data during drive failures, and various background tasks are implemented in the disk drive firmware, such as ECC & IO re-try operations during failures, which add to the overall latency of the drive. With modern SDS technologies (in use within Public Cloud platforms as well as in some new enterprise SDS vendors' tech), however, data is automatically protected across multiple drives as part of the Software Defined Architecture (e.g. through replication or Erasure Coding), which means those specific background tasks on disk drives, such as ECC and re-try mechanisms, are no longer required.
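To make the idea of rebuilding data above the drive more concrete, here is a minimal sketch. It uses a single XOR parity block, the simplest form of erasure code, purely as an illustration; I am assuming real hyperscaler systems use stronger codes, and the function names are my own, not anything SNIA presented.

```python
from functools import reduce

def xor_blocks(blocks):
    """XOR equal-length byte blocks together, byte position by byte position."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))

def encode(data_blocks):
    """Return the data blocks plus one parity block (hypothetical layout)."""
    return list(data_blocks) + [xor_blocks(data_blocks)]

def rebuild(stripe, lost_index):
    """Recover the block at lost_index by XOR-ing all surviving blocks."""
    survivors = [b for i, b in enumerate(stripe) if i != lost_index]
    return xor_blocks(survivors)

# Each block would live on a different drive.
stripe = encode([b"AAAA", b"BBBB", b"CCCC"])
# Pretend the drive holding the second block failed.
recovered = rebuild(stripe, lost_index=1)
```

The point is that the software layer can recover the lost block from the surviving drives without waiting on the failed drive's own firmware to retry.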

For example, SNIA highlighted Eric Brewer, VP of Infrastructure at Google, who described the key metrics for a modern disk drive as:

- IOPS

- Capacity

- Lower tail latency (the long tail of latencies on a drive, arguably caused by various background tasks, typically means a 2-10x slower response from a disk in a RAID group, so disk- and SSD-based RAID stripes experience at least one slow drive 1.5%-2.2% of the time)

- Security

- Lower TCO
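The tail latency point is easier to see with a little arithmetic. The sketch below assumes each drive is slow independently with some probability, in which case the chance that a stripe touches at least one slow drive is 1 - (1 - p)^n. The value of `p_slow` is a made-up illustration, not a figure from the SNIA session.

```python
def p_stripe_hits_slow_drive(p_slow: float, drives: int) -> float:
    """Probability that at least one of `drives` independent drives is slow."""
    return 1.0 - (1.0 - p_slow) ** drives

# Hypothetical per-drive probability of being in a slow state at any moment.
P_SLOW = 0.002

for n in (1, 4, 8, 16):
    print(n, round(p_stripe_hits_slow_drive(P_SLOW, n), 4))
```

The wider the stripe, the more often a request has to wait on a slow drive, which is why tail latency matters more than average latency at scale.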

So in a nutshell, Public cloud platform providers are now mandating various storage standards that disk drive manufacturers have to factor into their drive design, so that the drives are engineered from the ground up to work with the Software Defined architecture in use at these cloud providers. This means most native disk firmware operations are made redundant; instead, the drive manufacturer provides APIs through which the cloud platform provider's own software logic controls those background operations, based on its software defined storage architecture.
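As a rough sketch of what "the host controls the background operations" could look like, the toy model below only lets a drive run its pending maintenance when the host sees it as idle. Everything here (the `Drive` class, `run_maintenance`, the idle threshold) is hypothetical and stands in for whatever real interface a drive would expose.

```python
from dataclasses import dataclass, field

@dataclass
class Drive:
    """Toy model of a drive whose maintenance is triggered by the host."""
    name: str
    pending_maintenance: list = field(default_factory=list)
    completed: list = field(default_factory=list)

    def run_maintenance(self):
        # Stand-in for a real host-to-drive command; no real drive API is used.
        self.completed.extend(self.pending_maintenance)
        self.pending_maintenance.clear()

def schedule_maintenance(drives, outstanding_io, idle_threshold=0):
    """Let a drive do background work only while the host sees it as idle."""
    serviced = []
    for drive in drives:
        is_idle = outstanding_io.get(drive.name, 0) <= idle_threshold
        if is_idle and drive.pending_maintenance:
            drive.run_maintenance()
            serviced.append(drive.name)
    return serviced

drives = [Drive("d0", ["garbage_collection"]), Drive("d1", ["scrub"])]
# d0 is busy serving I/O, d1 is idle, so only d1 gets to run its scrub.
serviced = schedule_maintenance(drives, outstanding_io={"d0": 12, "d1": 0})
```

The busy drive keeps its maintenance queued until the host decides the latency hit is acceptable.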

Some of the key results of this approach include the following architectural designs for Public Cloud storage drives:

- Higher layer software handles data availability and is resilient to component failure so the drive firmware itself doesn’t have to.

- Custom Data Center monitoring (telemetry), and management (configuration) software monitors the hardware and software health of the storage infrastructure so the drive firmware doesn’t have to

- The Data Center monitoring software may detect these slow drives and mark them as failed (ref Microsoft Azure) to eliminate the latency issue.

- The Software Defined Storage then automatically finds new places to replicate the data and protection information that was on that drive

- Primary model has been Direct Attached Storage (DAS) with CPU (memory, I/O) sized to the servicing needs of however many drives of what type can fit in a rack’s tray (or two) – See the OCP Honey Badger

- With the advent of higher speed interfaces (PCIe NVMe), SSDs are moving off the motherboard onto an extended PCIe bus shared with multiple hosts and JBOF enclosure trays – See the OCP Lightning proposal

- Remove the drive's ability to schedule advanced background operations such as garbage collection, scrubbing, remapping, cache flushes, continuous self-tests…etc on its own, and allow the host to influence the scheduling of these latency-increasing drive maintenance operations when it sees fit. This effectively removes the drive control plane and moves it up under the control of the Public Cloud platform (SAS = SBC-4 Background Operation Control, SATA = ACS-4 Advanced Background Operations feature set, NVMe = available through NVMe Sets)

- Reduces unpredictable latency fluctuations & tail latency
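The "detect slow drives, mark them failed, re-replicate" flow from the list above can be sketched in a few lines. This is only an illustration under my own assumptions: the 2x-of-median threshold, the drive names and the placement map are all hypothetical.

```python
from statistics import median

def find_slow_drives(p99_latency_ms, slow_factor=2.0):
    """Flag drives whose p99 latency exceeds slow_factor x the fleet median."""
    baseline = median(p99_latency_ms.values())
    return sorted(d for d, lat in p99_latency_ms.items() if lat > slow_factor * baseline)

def re_replicate(placement, failed, healthy):
    """Replace copies held on failed drives with copies on other healthy drives."""
    new_placement = {}
    for chunk, drives in placement.items():
        kept = [d for d in drives if d not in failed]
        spares = [d for d in healthy if d not in kept]
        needed = len(drives) - len(kept)
        new_placement[chunk] = kept + spares[:needed]
    return new_placement

latency = {"d0": 9.0, "d1": 10.0, "d2": 11.0, "d3": 45.0}
slow = find_slow_drives(latency)                      # drives treated as failed
healthy = [d for d in latency if d not in slow]
placement = re_replicate(
    {"chunk-a": ["d0", "d3"], "chunk-b": ["d1", "d2"]}, slow, healthy
)
```

Here the slow drive is simply treated as dead and its data is re-protected elsewhere, rather than waiting for the drive firmware to sort itself out.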

The result of all this is that Public Cloud platform providers such as Microsoft, Google and Amazon are now also involved in setting industry standards through organisations such as SNIA, a task previously only done by hardware manufacturers. An example is the DePop standard, now approved at T13, under which the storage host, rather than the disk firmware, shrinks the usable size of the drive by removing poorly performing (slow) physical elements such as drive sectors from the LBA address space. The most interesting part is that drive manufacturers are now required to replace drives once the total usable space lost matches the capacity of a full drive, without necessarily getting the old drive back (i.e. Public cloud providers only pay for usable capacity, and any unusable capacity is replenished with new drives). This is a totally different operational and commercial model to that of legacy storage vendors who consume drives from drive manufacturers.
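The commercial side of this is simple bookkeeping. The sketch below adds up the capacity removed from each drive and works out how many whole replacement drives would be owed; the capacities are invented and the actual contract terms are not something I know, so treat this as an illustration of the idea only.

```python
def replacement_drives_owed(drive_capacity_tb, depopulated_tb_per_drive):
    """Whole replacement drives owed once lost capacity adds up to full drives."""
    lost_tb = sum(depopulated_tb_per_drive)
    owed = lost_tb // drive_capacity_tb
    carried_over_tb = lost_tb - owed * drive_capacity_tb
    return owed, carried_over_tb

# Hypothetical fleet of 8 TB drives, with the TB removed from each drive so far.
owed, carried = replacement_drives_owed(8, [1, 2, 0, 3, 2, 1])
```

In this made-up example 9 TB has been lost across six drives, so one new drive is owed and 1 TB carries over towards the next one.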

Another concept pioneered by the Public cloud providers is called Streams, which maps lower-level drive blocks to the upper-level object, such as a file, that resides on them, so that all the blocks making up the file object are stored contiguously. This simplifies the effect of a TRIM or SCSI UNMAP command (executed when the file is deleted from the file system), which reduces the delete penalty and causes the least amount of wear to SSDs, extending their durability.
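A small simulation shows why keeping a file's blocks together helps on delete. It is a deliberately simplified model under my own assumptions (tiny erase blocks, two files written at the same time, hypothetical function names) and does not reflect how any particular SSD works internally.

```python
from collections import defaultdict

PAGES_PER_ERASE_BLOCK = 4  # hypothetical and tiny, for readability

def place(writes, use_streams):
    """Assign each written page to an erase block; returns blocks as lists of file ids."""
    open_blocks = defaultdict(list)  # one open block per stream, or one shared block
    sealed = []
    for file_id in writes:
        key = file_id if use_streams else "shared"
        open_blocks[key].append(file_id)
        if len(open_blocks[key]) == PAGES_PER_ERASE_BLOCK:
            sealed.append(open_blocks.pop(key))
    sealed.extend(block for block in open_blocks.values() if block)
    return sealed

def pages_to_relocate(blocks, deleted_file):
    """Valid pages that must be copied out before blocks holding deleted data can be erased."""
    return sum(
        sum(1 for f in block if f != deleted_file)
        for block in blocks
        if deleted_file in block
    )

writes = ["A", "B"] * 8  # two files written concurrently, page by page
mixed = pages_to_relocate(place(writes, use_streams=False), "A")
streamed = pages_to_relocate(place(writes, use_streams=True), "A")
```

Without streams, deleting file A leaves every erase block half full of file B's pages, which have to be copied elsewhere before the block can be erased. With streams, file A's blocks can simply be erased, which means fewer extra writes and less wear.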

Future proposals from Public Cloud platforms

SNIA also mentioned future focus areas for these public cloud providers, such as:

- Hyperscalers (Google, Microsoft Azure, Facebook, Amazon) are trying to get SSD vendors to expose more information about internal organization of the drives

- The goal is a 200 µs read response with a 99.9999% guarantee for NAND devices

- I/O Determinism means the host can control the order of writes and reads to the device to get predictable response times:

- Window of reading – deterministic responses

- Window of writing and background – non-deterministic responses

- The birth of ODM – Original Design Manufacturers

- There is a new category of storage vendors called Original Design Manufacturer (ODM) direct who package up best in class commodity storage devices into racks according to the customer specifications and who operate at much lower margins.

- They may leverage hardware/software designs from the Open Compute Project (OCP) or a similar effort in China called Scorpio, now under an organization called the Open Data Center Committee (ODCC), as well as from other available hardware/software designs.

- SNIA also mentioned a few examples of large global enterprise organisations, such as a large bank, using ODMs to build a custom storage platform and achieving over 50% cost savings compared with traditional enterprise storage
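Going back to the read latency goal in the list above, here is what that target works out to in practice. I am assuming "99.9999% guarantee" means that 99.9999% of reads complete within 200 µs, i.e. no more than one read in a million may be slower. The sample data is synthetic.

```python
def meets_read_target(latencies_us, limit_us=200, max_misses_per_million=1):
    """True if no more than max_misses_per_million reads exceed limit_us."""
    misses = sum(1 for latency in latencies_us if latency > limit_us)
    # Integer comparison avoids floating point rounding around 0.999999.
    return misses * 1_000_000 <= max_misses_per_million * len(latencies_us)

samples = [150] * 999_999 + [900]   # synthetic: one slow read in a million
ok = meets_read_target(samples)
too_slow = meets_read_target(samples + [900])
```

A single extra slow read in a million is enough to miss the target, which gives a sense of why the host wants control over when the drive does its background work.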

My Thoughts

All of these changes introduced by the Public Cloud platforms are set to collectively change how the rest of the storage industry fundamentally operates, which I believe will be good for end customers. Public cloud providers are often software vendors who approach every problem with a software-centric solution, typically with a highly cost-efficient architecture that uses the cheapest commodity hardware underpinned by intelligent software. This will likely reshape the legacy storage industry too, and we are already seeing the early signs today in the sudden growth of enterprise-focused Software Defined Storage vendors and in legacy storage vendors struggling with their storage revenue. Public cloud computing and storage platforms continuously evolve for cost efficiency, and each of their innovations in how storage is designed, built & consumed will trickle down to enterprise data centers in some shape or form to increase overall efficiency, which surely is only a good thing, at least in my view. Smart enterprise storage vendors that are software focused will take note of such trends and adapt accordingly (e.g. SNIA mentioned that NetApp implemented the Stream commands on the front end of their arrays to increase the life of the SSD media), whereas legacy storage / hardware vendors who are effectively still hugging their tin will likely find future survival more than challenging.

Also, the concept of ODMs really interests me, and I can see their use increasing as more and more customers wake up to the fact that they have been overpaying for data center storage for the past 10-20 years due to the historically exclusive capabilities within the storage industry. With more of a focus on a Software Defined approach, there are potentially large cost savings to be had by following the ODM approach, especially if your organisation is large enough to benefit from them.

I would be glad to get your thoughts through the comments below.

If you are interested in the full SNIA session, a recording of the video stream is available here and I’d recommend you watch it, especially if you are in the storage industry.

Thanks

Chan

P.S. Slide credit goes to SNIA and TFD