Yesterday, I had the privilege of being invited to an exclusive VMware #vExpert-only webinar organised by the vExpert community manager, Corey Romero, and DataGravity, one of their partner ISVs, to get a closer look at the DataGravity solution and its integration with VMware. My initial impression is that it’s a good solution and a good match for VMware technology, and I rather liked what I saw. So I decided to write a quick post to share what I’ve learned.

DataGravity Introduction

The DataGravity (DG from now on) solution is all about data management, and in particular data management in a virtualised data center. In a nutshell, DG provides a simple, virtualisation-friendly data management solution that, amongst many other things, focuses on the following key requirements, which are of primary importance to me.

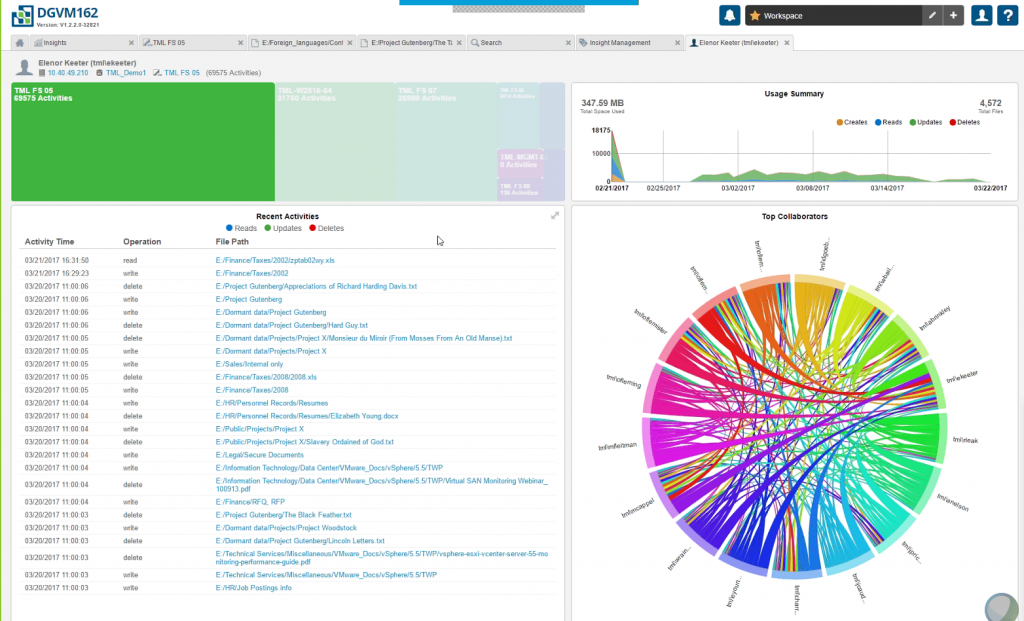

- Data awareness – Understands the different types of data held within VMs, structured or unstructured, along with various metadata about that data. It automatically keeps track of data locations, status changes and other metadata, including any sensitive content (e.g. credit card information), and presents it all in an easy-to-read, dashboard-style interface

- Data protection & security – DG tracks sensitive data and provides a complete audit trail, including access history, to help remediate any potential loss or compromise of data
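The webinar did not go into how DG actually recognises sensitive content, so the following is only a minimal sketch of one common, generic way to spot likely credit card numbers in text: find digit runs of a plausible length, then keep only those that pass the Luhn checksum. The pattern and the function names (`CARD_CANDIDATE`, `luhn_valid`, `find_card_numbers`) are my own illustrative assumptions, not anything from the product.

```python
import re

# Assumed candidate pattern: 13 to 19 digits, optionally separated by
# single spaces or hyphens. Real products will use richer rules.
CARD_CANDIDATE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")


def luhn_valid(digits: str) -> bool:
    """Return True if the digit string passes the Luhn checksum."""
    total = 0
    for index, char in enumerate(reversed(digits)):
        value = int(char)
        if index % 2 == 1:  # double every second digit, counting from the right
            value *= 2
            if value > 9:
                value -= 9
        total += value
    return total % 10 == 0


def find_card_numbers(text: str) -> list[str]:
    """Return digit-only strings that look like card numbers and pass Luhn."""
    hits = []
    for match in CARD_CANDIDATE.finditer(text):
        digits = re.sub(r"[ -]", "", match.group())
        if luhn_valid(digits):
            hits.append(digits)
    return hits


sample = "Paid with 4111 1111 1111 1111, ref 1234 5678 9012 3456"
print(find_card_numbers(sample))  # only the well-known test number passes
```

The checksum step matters because plenty of 16-digit strings (order references, serial numbers) are not card numbers at all, and filtering them out keeps the false positives down.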

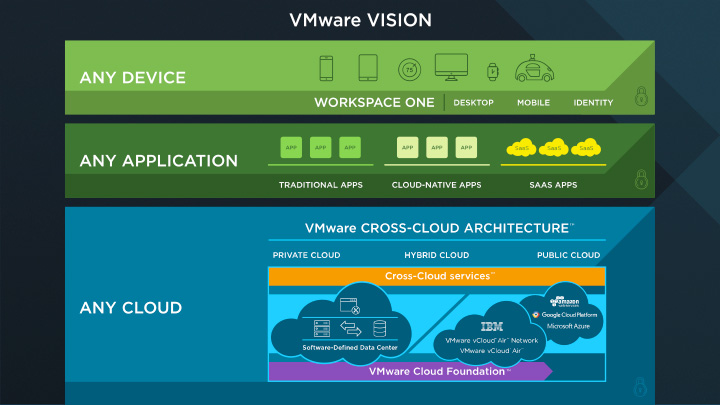

The DG solution is currently specific to VMware vSphere virtual datacenter platforms and serves 4 key use cases, as shown below.

As for the data visualisation itself, DG claims to provide a 360-degree view of all the data that resides within your virtualised datacenter (on VMware vSphere). Having seen the UI in the live demo, I like the visualisation, which very much resembles the interface of VMware’s own vRealize Operations.

The unified, tile-based view of all the data in your datacenter, with a vROps-like context-aware UI, makes navigating through the information about your data pretty self-explanatory.

Some of the information that DG automatically tracks for all the data that resides in the VMware datacenter is shown below.

Some of the cool capabilities DG has when it comes to data protection itself include behaviour-based data protection, where it proactively monitors user and file activity and mitigates potential attacks by sensing anomalous behaviour and taking preventive measures, ranging from orchestrating protection points and alerting administrators to blocking user access automatically.

During a recovery scenario, DG claims to assemble the forensic information needed to perform a quick recovery, such as a catalogue of files and incremental version information, user activity information and other key metadata such as the known good state of various files, which enables recovery in a few clicks.

Some Details

During the presentation, Dave Stevens (Technical Evangelist) took all the vExperts through the DG solution and its integration with VMware vSphere in some detail, which I intend to share below for the benefit of others (sales people: feel free to skip this section and read the next).

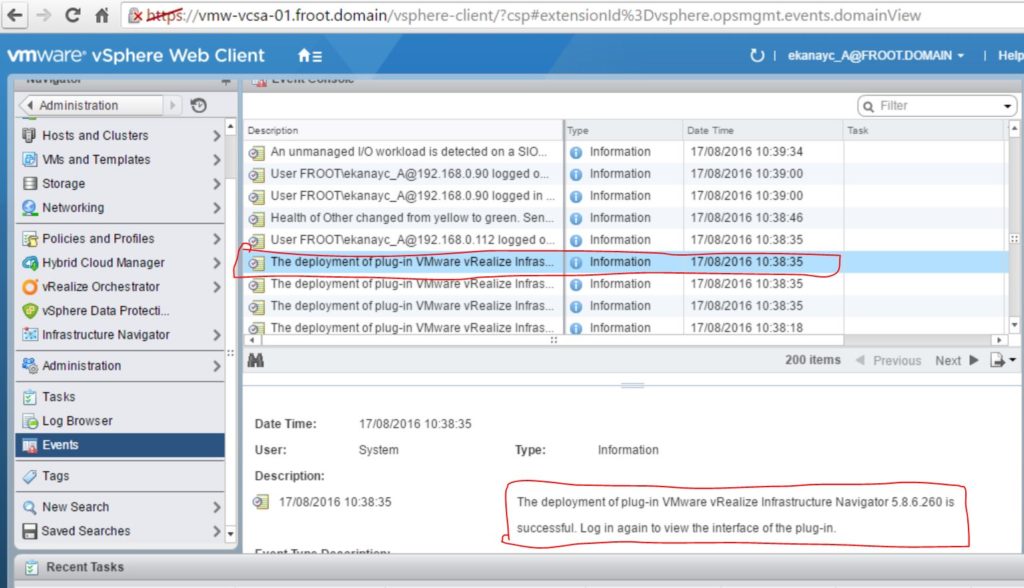

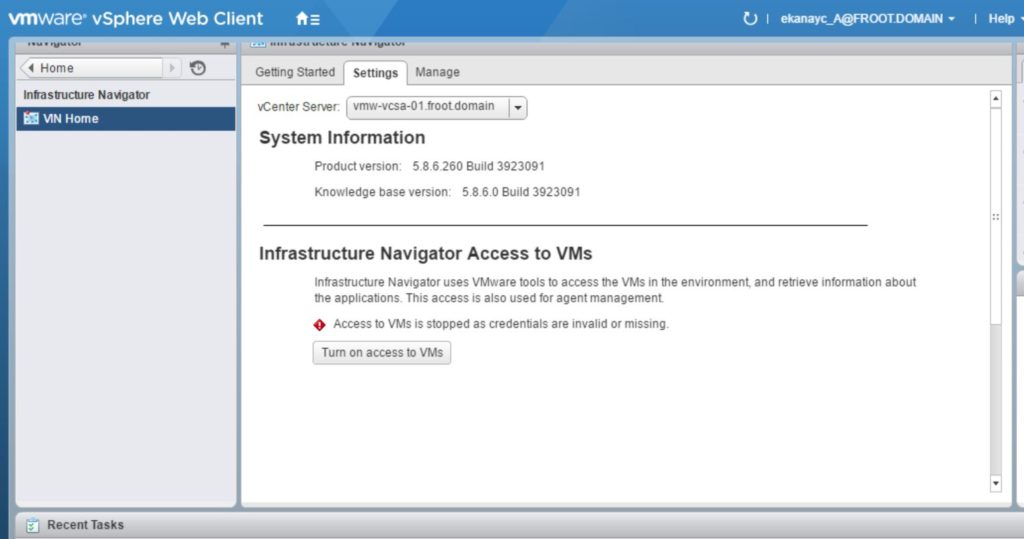

The whole DG solution is deployed as a simple OVA into vCenter and typically requires connecting the appliance to Microsoft Active Directory (for user access tracking) as a one-off initial task. It then performs an automated initial discovery of data. The important thing to note here is that it DOES NOT use an agent in each VM but simply uses VMware VADP (now known as vSphere Storage APIs – Data Protection) to silently interrogate the data that lives inside the VMs in the data center with minimal overhead. Some of the specifics around this overhead are as follows:

- File indexing is done at a DiscoveryPoint (snapshot), either on a schedule or user-driven, so there is no impact on real-time access from a performance point of view.

- Real-time access tracking overhead is minimal to non-existent

- Real-time user activity tracking uses about 200 KB of memory

- Network bandwidth is about 50 kbps per VM

- Less than 1% of CPU
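To put those figures in context, here is a rough back-of-the-envelope sketch that scales the quoted per-VM numbers up to a whole estate. It assumes the "200k" memory figure means 200 KB and applies per VM (the bandwidth figure was explicitly per VM), and that the overhead scales linearly. The class and function names are purely illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PerVmOverhead:
    # Assumption: "200k of memory" is read as 200 KB per VM.
    memory_kb: float = 200.0
    # Quoted in the webinar as about 50 kbps per VM.
    bandwidth_kbps: float = 50.0


def estimate_overhead(vm_count: int,
                      per_vm: PerVmOverhead = PerVmOverhead()) -> dict[str, float]:
    """Scale the per-VM figures linearly across vm_count VMs."""
    return {
        "memory_mb": vm_count * per_vm.memory_kb / 1024,
        "bandwidth_mbps": vm_count * per_vm.bandwidth_kbps / 1000,
    }


totals = estimate_overhead(500)
print(f"Memory:    {totals['memory_mb']:.1f} MB")      # 97.7 MB
print(f"Bandwidth: {totals['bandwidth_mbps']:.1f} Mbps")  # 25.0 Mbps
```

So under these assumptions, even a 500 VM estate would add up to under 100 MB of memory and roughly 25 Mbps of aggregate network traffic, which is small but worth knowing about if your VMs share a constrained link.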

From an integration point of view, while the DG solution integrates with vSphere VMs as above irrespective of the underlying storage platform, it can also integrate with specific storage vendors (licensing prerequisites apply).

Once the initial data discovery is complete, further discoveries are done on an incremental basis. The management UI is a simple web interface which looks pretty neat.

Similar to the VMware vROps UI, the whole UI is context-aware, so depending on which object you select, you are presented with stats in the context of the selected object(s).

The usage tracking is quite granular and keeps track of all types of user access to the data in the inventory, which is handy.

Searching for files is simple, and you can also search using tags, which are simple binary expressions. Tags can be grouped together into profiles to search against, which looks pretty simple and efficient.
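To illustrate the idea of tags as binary expressions grouped into profiles, here is a minimal conceptual sketch. It is not DG's actual data model or API: every name here (`FileRecord`, `Tag`, `Profile`, `search`) and every field is a hypothetical stand-in of mine. Each tag is simply a true/false test against a file's metadata, and a profile is a named group of tags you search against in one go.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class FileRecord:
    # Hypothetical metadata fields, for illustration only.
    path: str
    contains_card_number: bool
    days_since_access: int


@dataclass(frozen=True)
class Tag:
    name: str
    matches: Callable[[FileRecord], bool]  # the binary (true/false) expression


@dataclass(frozen=True)
class Profile:
    name: str
    tags: tuple[Tag, ...]

    def matching_tags(self, record: FileRecord) -> list[str]:
        """Names of the tags in this profile that the record satisfies."""
        return [tag.name for tag in self.tags if tag.matches(record)]


def search(records: list[FileRecord], profile: Profile) -> dict[str, list[str]]:
    """Map each file path to the profile tags it matched, skipping non-matches."""
    results = {}
    for record in records:
        hits = profile.matching_tags(record)
        if hits:
            results[record.path] = hits
    return results


pci = Tag("pci", lambda r: r.contains_card_number)
stale = Tag("stale", lambda r: r.days_since_access > 365)
review = Profile("review", (pci, stale))

inventory = [
    FileRecord("finance/invoice.docx", True, 10),
    FileRecord("archive/notes.txt", False, 400),
    FileRecord("projects/readme.txt", False, 5),
]
print(search(inventory, review))
# {'finance/invoice.docx': ['pci'], 'archive/notes.txt': ['stale']}
```

Grouping tags this way means a single profile search can answer a compound question such as "which files are either sensitive or stale?" without running each tag separately.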

I know I’ve mentioned this already, but the simple, intuitive user interface, which presents the information about all your data in a single-pane-of-glass manner, looks very attractive.

Current Limitations

There are some current limitations to be aware of, however. Some of the important ones are:

- Currently it doesn’t look inside structured data files (e.g. database files)

- Covers about 600 file types

- File content analytics is available for Windows VMs only at present (Linux may follow soon?)

- VMC (VMware Cloud on AWS) & VCF (VMware Cloud Foundation) support is not there yet (is this to be announced during a potential big event?)

- No current availability on other public cloud platforms such as AWS or Azure (yet)

My Thoughts

I like the solution and its capabilities for various reasons. Primarily, it’s because the focus on the data that resides in your data center is more important now than it’s ever been. Most organisations simply do not have a clue about the types of data they hold in a datacenter, which is typically scattered across various servers, systems, applications etc., often duplicated and, most importantly, left untracked in terms of its current relevance or its actual usage (who accesses what). Often, data generated by an organisation serves its purpose within a certain initial period and is then simply kept on the systems forever, intentionally or unintentionally. This is costly, especially on the storage front, as you are typically filling your SAN storage with stale data. With a simple yet intelligent data management solution like DG, you now have the ability to automatically track data and its ageing across the datacenter, and use that awareness to potentially move stale data on to a different tier, especially a cheaper tier such as a public cloud storage platform.

Furthermore, a lack of data governance, specifically not monitoring data access across the datacenter, is another issue: many organisations do not collectively know what type of data is held where within the datacenter, or how secure that data is, including its access and usage history over its lifetime. Data security is probably the most important topic in the industry today, as organisations are increasingly becoming digital thanks to the digital revolution / Digital Enterprise phenomenon (in other words, every organisation is now becoming digital). A guaranteed by-product of this is more and more DATA being generated, which often includes most if not all of an organisation’s intellectual property. If there’s no credible data management solution focusing on security for such data, you risk losing the livelihood of your organisation and its potential survival in a fiercely competitive global economy.

It is important to note that some regulatory compliance regimes have always enforced the use of data management & governance solutions such as DG to track such information about data and its security for certain types of data (e.g. PCI for credit card information). But the issue is that no such requirement existed for all types of data that live in your datacenter. This is about to change, at least here in Europe, thanks to the European GDPR (General Data Protection Regulation), which legally obliges every organisation to be able to provide an auditable history of all types of data that it holds, and most organisations I know do not have a credible solution covering the whole datacenter to meet such demands regarding their data today.

A simple, easily integrated solution with little overhead like DataGravity, which for the most part harnesses the capabilities of the underlying infrastructure to track and manage the data that lives on it, could be extremely attractive to many customers. Most customers out there today use VMware vSphere as their preferred virtualisation platform, and the obvious integration with vSphere will likely work in DG’s favour. I have already signed up for an NFR download so that I can deploy this software in my own lab and understand in detail how things work, and I aim to publish a detailed deep-dive post on that soon. In the meantime, I’d encourage anyone who runs a VMware vSphere based datacenter and is concerned about data management & security to check the DG solution out!!

Keen to hear your thoughts if you are already using this in your organisation.

Cheers

Chan

Slide credit to VMware & DataGravity!