In the previous article of this VMware NSX tutorial, we looked at the VXLAN & Logical switch deployment within NSX. It’s now time to look at another key component of NSX, which is the Distributed Logical Router, also known as DLR.

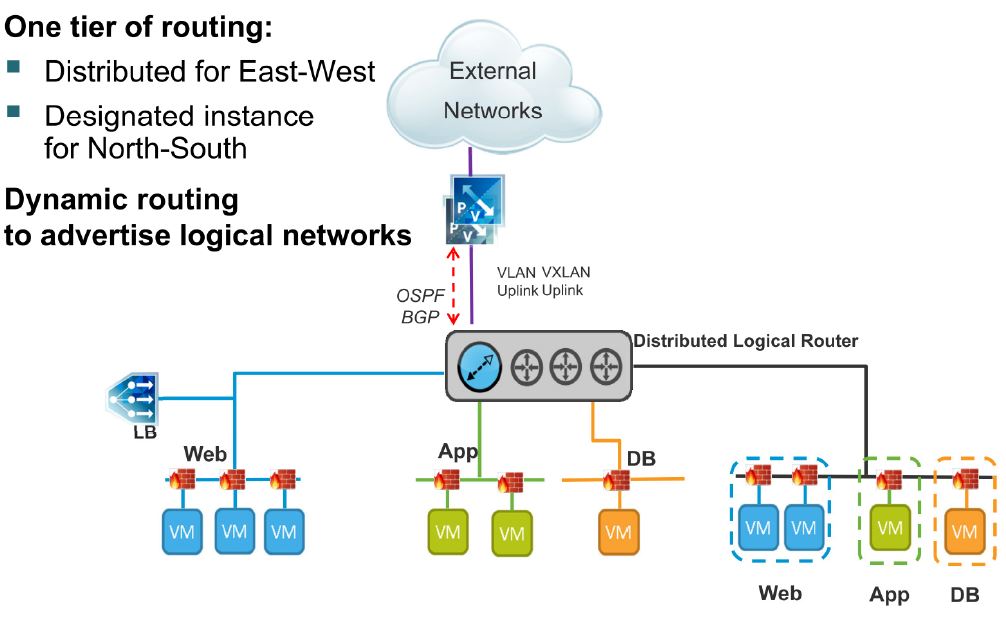

VMware NSX provides the ability to route traffic (between 2 different L2 segments, for example) within the hypervisor without ever having to send the packet out to a physical router. With traditional vSphere solutions this was not possible: if, for example, the application server VM in vlan 101 needs to talk to the DB server VM in vlan 102, the packet needs to go out of the vlan 101 tagged port group via the uplink ports to the L3 enabled physical switch, which performs the routing and sends the packet back to the vlan 102 tagged portgroup, even if both VMs reside on the same ESXi server. This new ability to route within the hypervisor is made available as follows:

- East-West routing = Using the Distributed Logical Router (DLR)

- North-South routing = NSX Edge Gateway device

This post aims to summarise the DLR architecture, key points and its deployment.

DLR Architectural Highlights

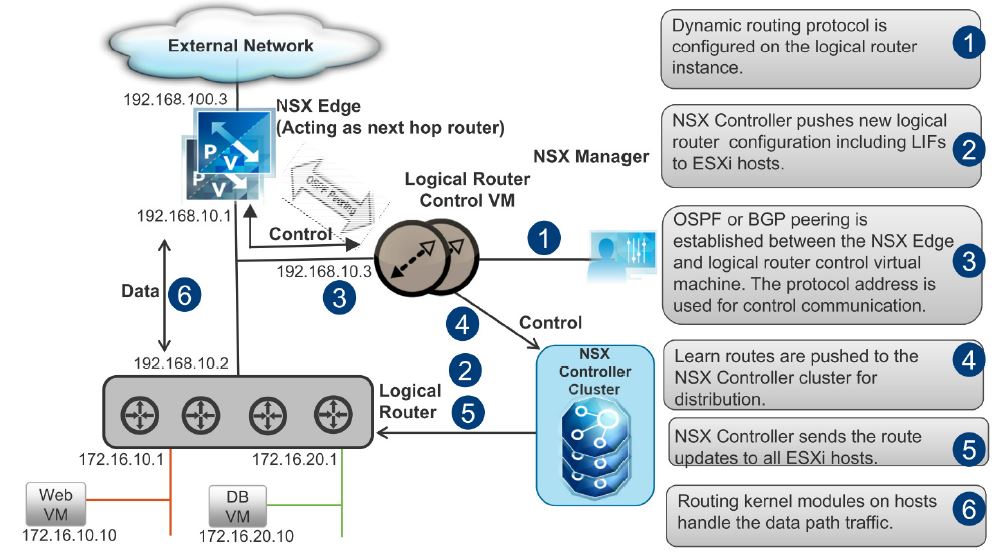

- DLR data plane capability is provided to each ESXi host during the host preparation stage, through the deployment of the DLR VIB component (as explained in a previous article of this topic)

- DLR control plane capability is provided through a dedicated control virtual machine, deployed during the DLR configuration process, by the NSX manager.

- This control VM may reside on any ESXi host in the compute & edge cluster.

- Because the data and control planes are separated, a failure of the control VM doesn’t affect routing operations (however, no new routes are learnt while it is unavailable)

- Control VM doesn’t perform any routing

- The control VM can be deployed in high availability mode (an active and a passive control VM)

- Its main function is to establish routing protocol sessions with other routers

- Supports OSPF and BGP routing protocols for dynamic route learning (within the virtual as well as physical routing infrastructure) as well as static routes which you can manually configure.

- Management, Control and Data path communication is shown below

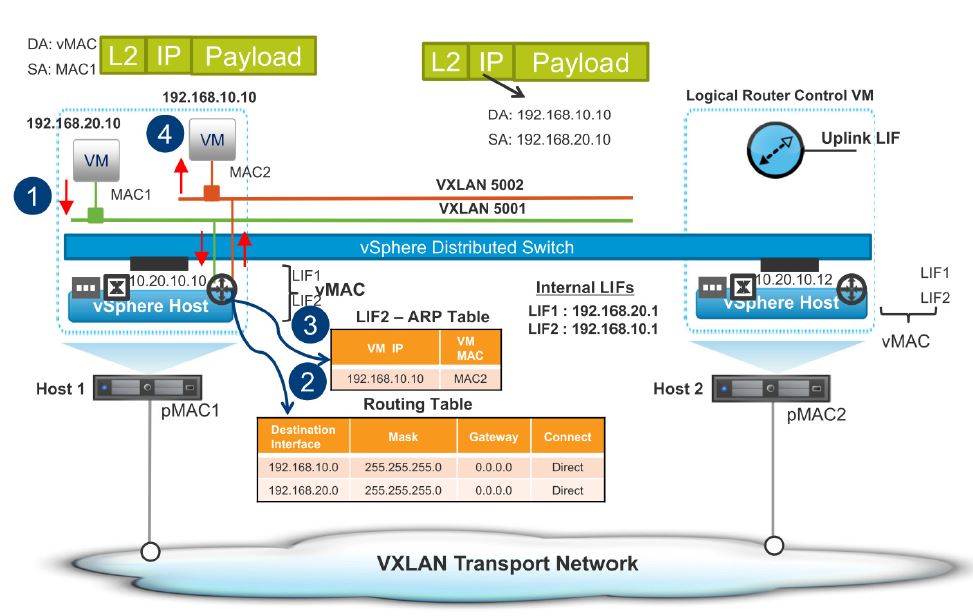

- Logical Interfaces (LIF)

- A single DLR instance can have a number of logical interfaces (LIF) similar to a physical router having multiple interfaces.

- A DLR can have up to 1000 LIFs

- A LIF connects to Logical Switches (VXLAN virtual wire) or distributed portgroups (tagged with a vlan)

- VXLAN LIF – Connected to a NSX logical switch

- A virtual MAC address (vMAC) assigned to the LIF is used by all the VMs that connect to that LIF as their default gateway MAC address, across all the hosts in the cluster.

- VLAN LIF – Connected to a distributed portgroup with one or more vlans (note that you CANNOT connect to a dvPortGroup with no vlan tag or vlan id 0)

- A physical MAC address (pMAC) assigned to an uplink through which the traffic flows to the physical network is used by the VLAN LIF.

- Each ESXi host will maintain a pMAC for the VLAN LIF at any point in time, but only one host responds to ARP requests for the VLAN LIF and this host is called the designated host

- Designated host is chosen by the NSX controller

- All incoming traffic (from the physical world) to the VLAN LIF is received by the designated instance

- All outgoing traffic from the VLAN LIF (to the physical world) is sent directly from the originating ESXi server rather than through the designated host.

- One designated instance (an ESXi server) exists per VLAN LIF

- The LIF configuration is distributed to each host

- An ARP table is maintained for each LIF

- DLR can route between 2 VXLAN LIFs (web01 VM on VNI 5001 on esxi01 server talking to app01 VM on VNI 5002 on the same or different ESXi hosts) or between physical subnets / VLAN LIFs (web01 VM on VLAN 101 on esxi01 server talking to app01 VM on VLAN 102 on the same or different ESXi hosts)

- DLR traffic flows – Same host

- 1. VM1 on VXLAN 5001 attempts to communicate with VM2 on VXLAN 5002 on the same host

- 2. VM1 sends a frame with L3 IP on the payload to its default gateway. The default gateway uses the destination IP to determine that it is directly connected to that subnet.

- 3. The default gateway checks its ARP table and finds the MAC address for that destination

- 4. VM2 is running on the same host. Default gateway passes the frame to VM2, packet never leaves the host.

- DLR traffic flow – Different hosts

- 1. VM1 on VXLAN 5001 attempts to communicate with VM2 on VXLAN 5002 on a different host. Since VM2 is on a different subnet, VM1 sends the frame to the default gateway

- 2. The default gateway sends the traffic to the router and the router determines that the destination IP is on a directly connected interface

- 3. The router checks its ARP table to obtain the MAC address of the destination VM but the MAC is not listed. The router sends the frame to the logical switch for VXLAN 5002.

- 4. The source and destination MAC addresses on the internal frame are changed, so the destination MAC is the address of VM2 and the source MAC is the vMAC of the LIF for that subnet. The logical switch in the source host determines that the destination is on host 2

- 5. The logical switch puts the Ethernet frame in a VXLAN frame and sends the frame to host 2

- 6. Host 2 takes out the L2 frame, looks at the destination MAC and delivers it to the destination VM.
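The two traffic flows above can be sketched in a few lines of Python. This is only a rough model of the decision steps (connected-subnet lookup, ARP lookup, MAC rewrite, and whether the frame needs VXLAN encapsulation), not how the DLR kernel module is actually implemented. The vMAC value, VM MAC addresses, IP addresses and host names below are made up for illustration, and the ARP table is assumed to be already populated.

```python
from dataclasses import dataclass
from ipaddress import IPv4Network, ip_address, ip_network

# Placeholder vMAC for illustration only (an assumption, not taken from the post).
VMAC = "02:00:00:00:00:01"


@dataclass(frozen=True)
class Lif:
    name: str
    vni: int
    network: IPv4Network


@dataclass(frozen=True)
class Vm:
    name: str
    ip: str
    mac: str
    host: str


def route(src: Vm, dst_ip: str, lifs: list[Lif], arp: dict[str, Vm]) -> dict:
    """Model the routing decision made on the source host."""
    # Find the LIF whose subnet is directly connected to the destination IP.
    dst_addr = ip_address(dst_ip)
    lif = next((l for l in lifs if dst_addr in l.network), None)
    if lif is None:
        raise LookupError(f"no directly connected LIF for {dst_ip}")
    # Look up the destination in the (pre-populated) ARP table.
    dst_vm = arp[dst_ip]
    # Rewrite the MACs; encapsulate only if the destination is on another host.
    return {
        "vni": lif.vni,
        "src_mac": VMAC,
        "dst_mac": dst_vm.mac,
        "vxlan_encapsulated": dst_vm.host != src.host,
    }


# Hypothetical sample data: one VM on VNI 5001, two VMs on VNI 5002.
LIFS = [
    Lif("lif-5001", 5001, ip_network("192.168.2.0/24")),
    Lif("lif-5002", 5002, ip_network("192.168.1.0/24")),
]
vm1 = Vm("VM1", "192.168.2.10", "00:50:56:00:00:01", "esxi01")
vm2_local = Vm("VM2", "192.168.1.10", "00:50:56:00:00:02", "esxi01")
vm2_remote = Vm("VM2", "192.168.1.20", "00:50:56:00:00:03", "esxi02")
ARP = {vm.ip: vm for vm in (vm1, vm2_local, vm2_remote)}

same_host = route(vm1, "192.168.1.10", LIFS, ARP)
other_host = route(vm1, "192.168.1.20", LIFS, ARP)
print(same_host)
print(other_host)
```

In the same-host case the result shows no encapsulation, which matches the point that the packet never leaves the host. In the different-host case the only change is that the rewritten frame is wrapped in VXLAN before being sent to host 2.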

DLR Deployment Steps

Given below are the key deployment steps.

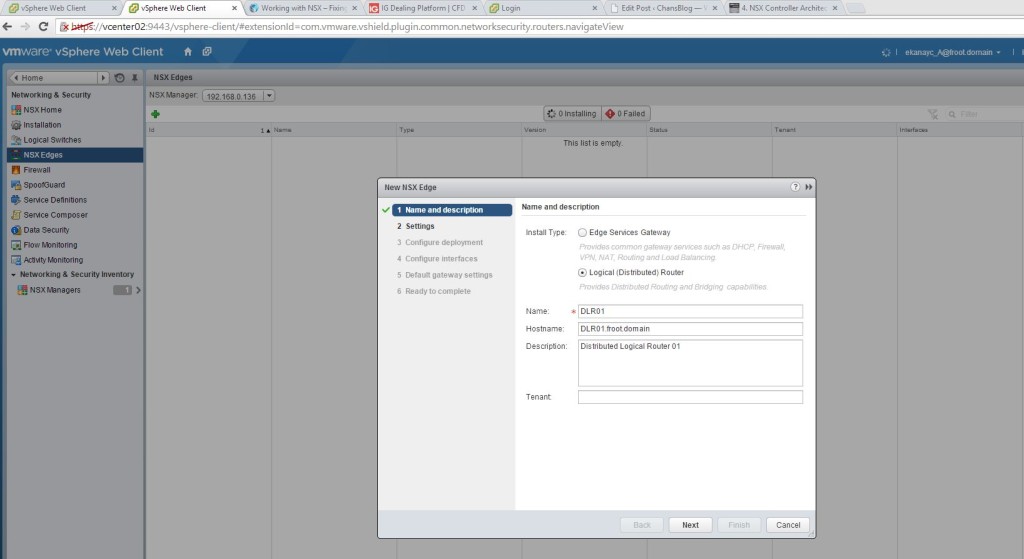

- Go to vSphere Web client -> Networking & Security and click on NSX Edges in the left hand pane. Click the plus sign at the top, select Logical Router, provide a name & click next.

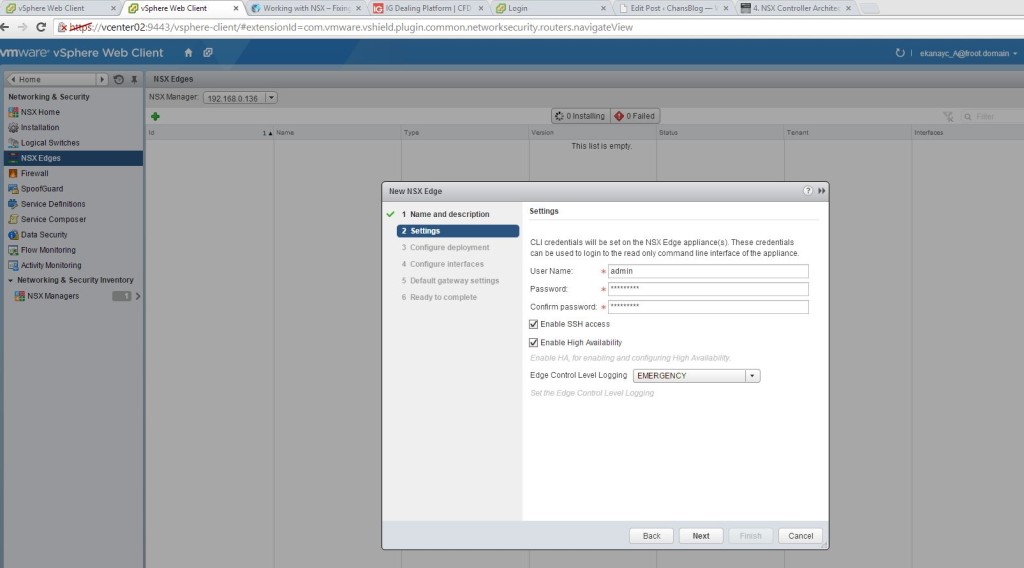

- Now provide the CLI (SSH) username and password. Note that the password here needs to be a minimum of 12 characters and must include a special character. Click on enable SSH to be able to putty on to it (note that you also need to enable the appropriate firewall rules later, without which SSH won’t work). Enabling HA will deploy a second VM as a standby. Click next.

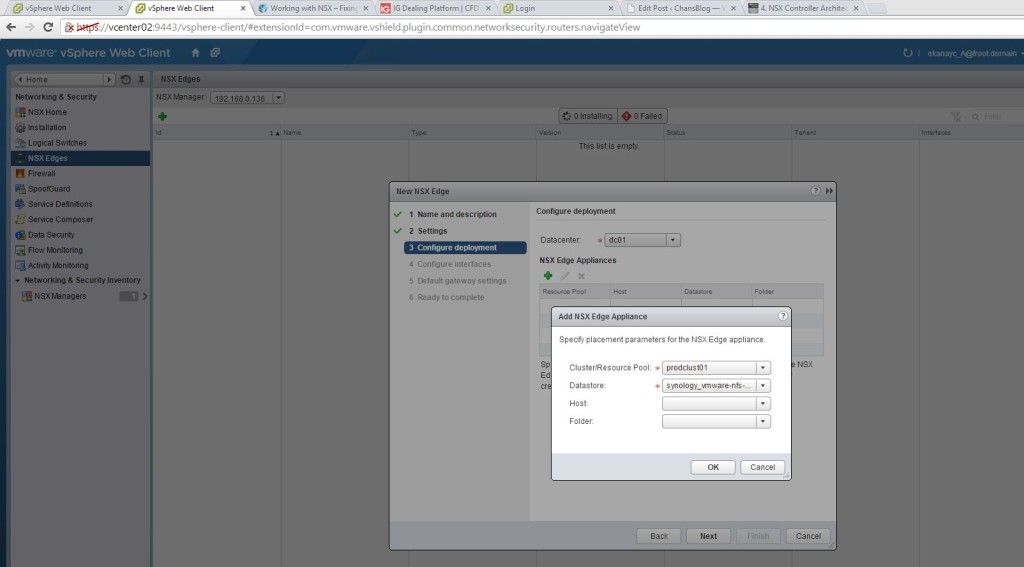
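If you script your deployments, it can be handy to pre-check the password before submitting it. Below is a minimal sketch that checks only the two rules mentioned above (at least 12 characters and at least one special character). The function name is my own, and NSX may well enforce additional complexity rules that are not covered here.

```python
import string

MIN_LENGTH = 12  # minimum length stated in this post


def meets_stated_password_rules(password: str) -> bool:
    """Check only the two rules described in this post (hypothetical helper)."""
    has_special = any(ch in string.punctuation for ch in password)
    return len(password) >= MIN_LENGTH and has_special


print(meets_stated_password_rules("VMware1!VMware1!"))
print(meets_stated_password_rules("short1!"))
```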

- Select the cluster location and the datastore where the NSX Edge appliances (which provide the DLR capabilities) will be deployed. Note that both appliances will be deployed on the same datastore, and if you require that they be deployed on different datastores for HA purposes, you’d need to svmotion one to a different datastore manually.

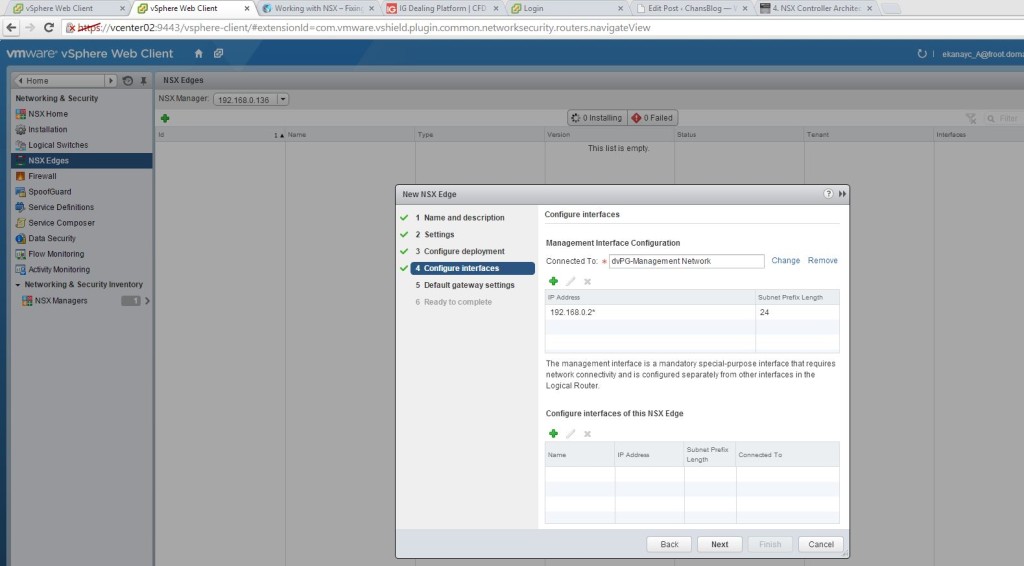

- In the next screen, you configure the interfaces (LIF – explained above). There are 2 main types of interfaces here. The management interface is used to manage the NSX edge device (e.g. to SSH on to it) and is usually mapped to the management network. All other interfaces are mapped to either VXLAN networks or VLAN backed portgroups. First, we create the management interface by connecting it to the management distributed port group and providing an IP address on the management subnet for this interface.

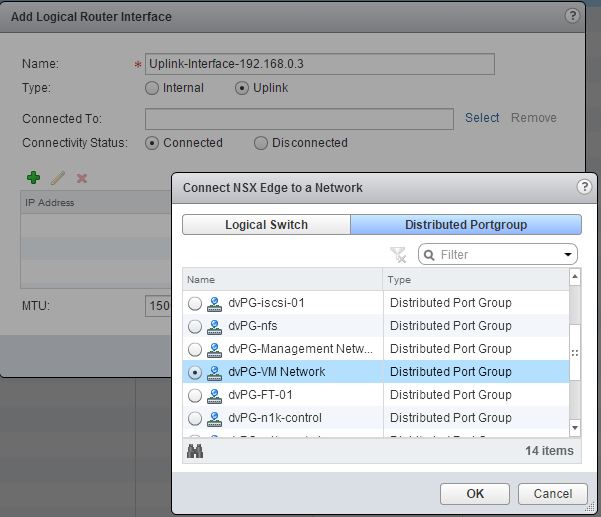

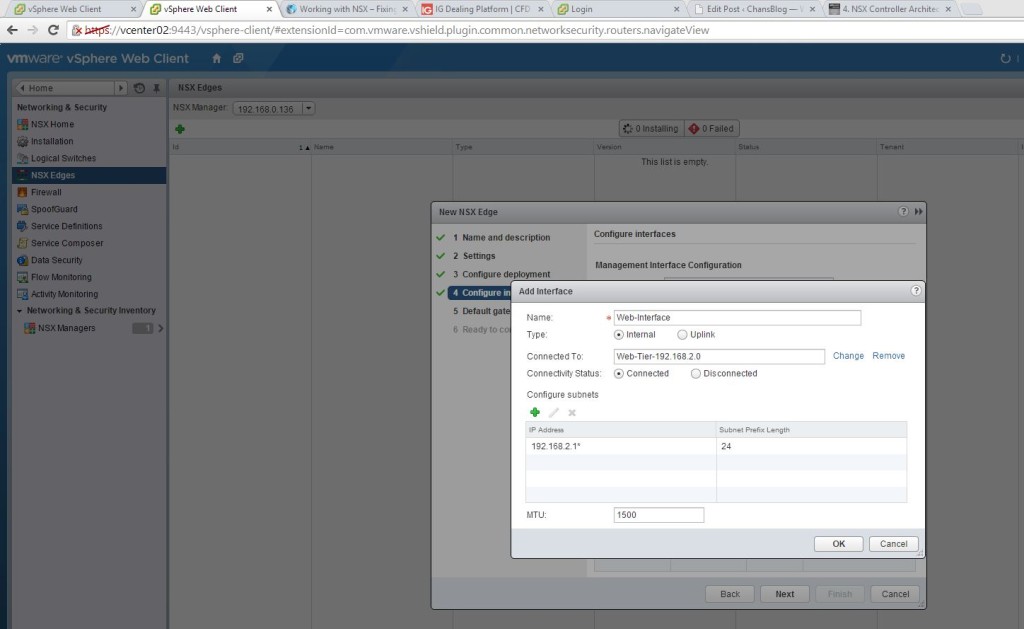

- And then, click the plus sign at the bottom to create & configure the other interfaces used to connect to VXLAN networks or VLAN tagged port groups. These interfaces fall in to 2 types: uplink interfaces and internal interfaces. An uplink interface connects the DLR / Edge device to an external network, and often this would be connected to one of the “VM Network” portgroups to connect the internal interfaces to the outside world. Internal interfaces are typically mapped to NSX virtual wires (VXLAN networks) or a dvPortGroup. For each interface you create, an interface subnet must be specified along with an IP address for the interface (often, this would be the default gateway IP address for all the VMs belonging to the VXLAN network / dvPortGroup mapped to that interface). Below we create 3 different interfaces: an uplink and 2 internal interfaces mapped to the 2 VXLAN networks called App-Tier and Web-Tier (created in a previous post of this series during the VXLAN & Logical switch deployment).

- Uplink Interface – This uplink interface would map to the “VM network” dvPortGroup and would provide external connectivity from the internal interfaces of the DLR to the outside world. It will have an IP address from the external network IP subnet and is reachable from the outside world using this IP (once the appropriate firewall rules are in place). Note that this dvPG needs to have a vlan tag other than 0 (a VLAN ID must be defined on the connected portgroup)

- We then create 2 internal interfaces, one for the Web-tier (192.168.2.0/24) and another for the App-Tier (192.168.1.0/24). The interface IP would be the default gateway for the VMs.

- Once all the 3 interfaces are configured, verify settings and click next.

- The next screen allows you to configure a default gateway. If the uplink interface has been correctly configured, you would select it as the vNIC and enter the default gateway of the external network as the gateway IP. In my example below, I’m not configuring this as I do not need my VM traffic (on the VXLAN networks) to go to the outside world.

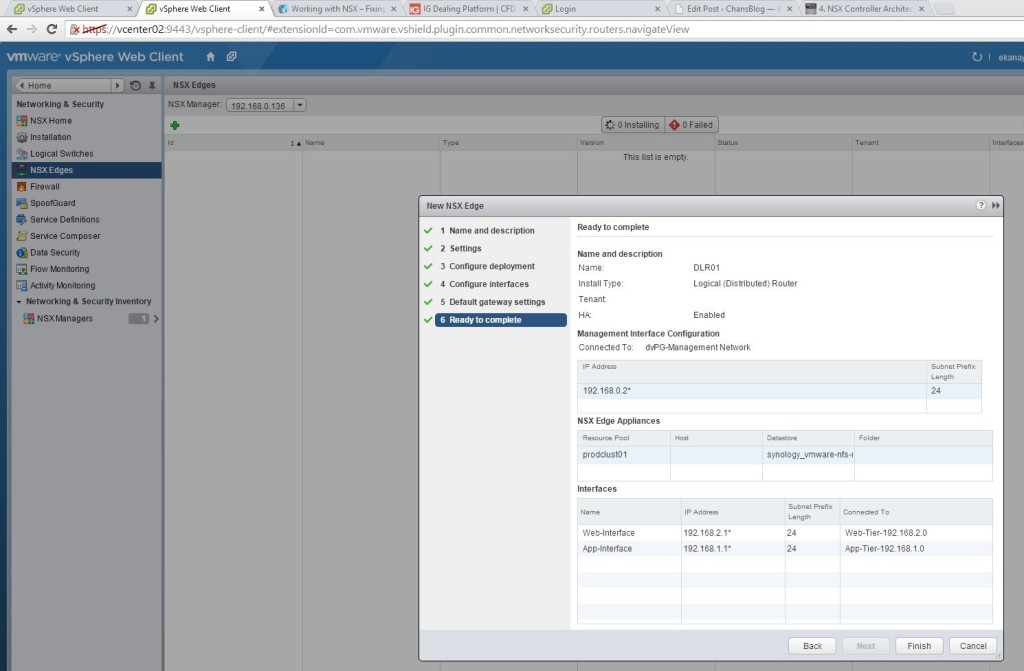

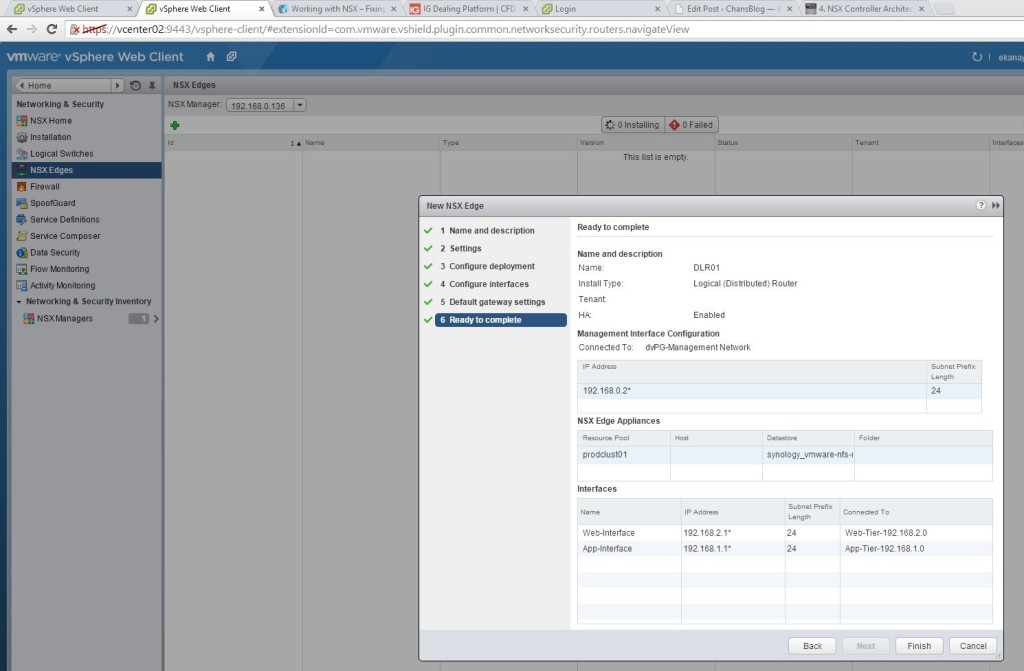

- In the final screen, review all settings and click finish for the NSX DLR (edge devices) to be deployed as appliances. These would be the control VM’s referred to earlier in the post.

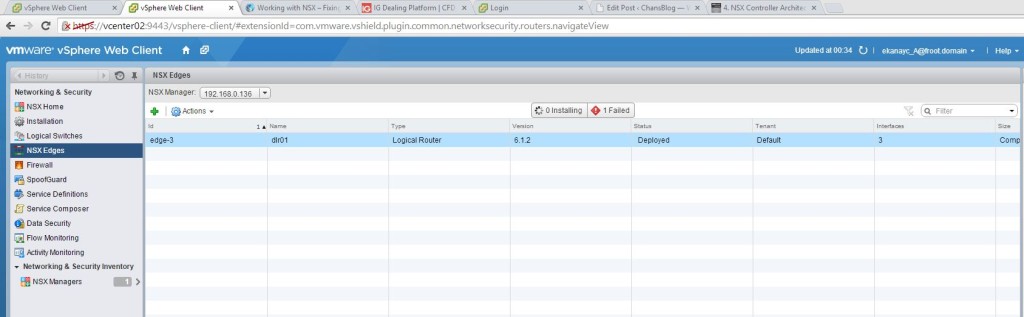

- Once the appliances have been deployed on to the vSphere cluster (compute & Edge clusters), you can see the Edge devices under the NSX Edges section as shown below

- You can double click on the edge device to go to the configuration details as shown below

- You can make further configurations here including adding additional interfaces or removing existing interfaces…etc.

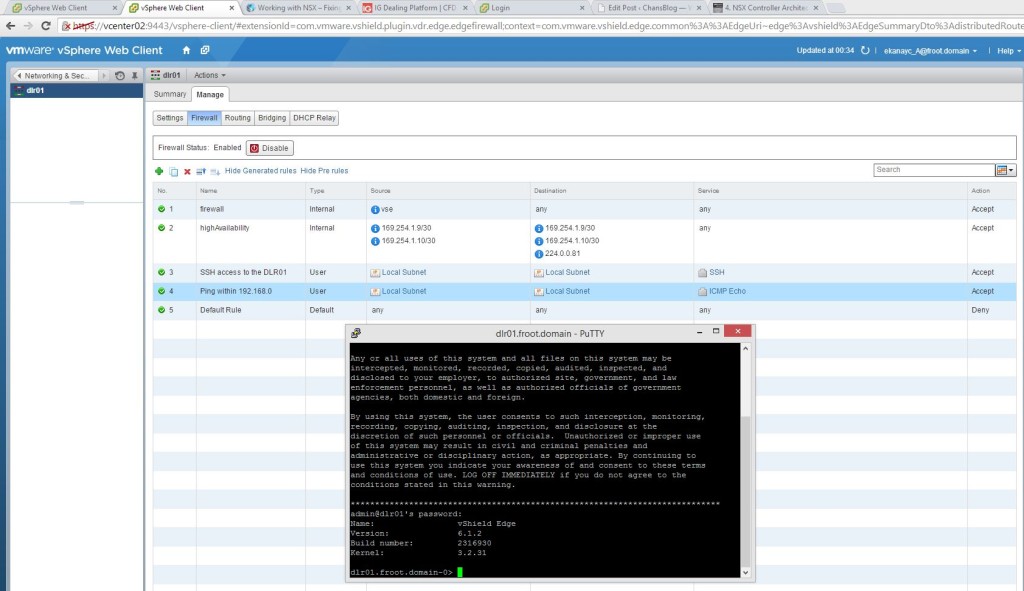

- By default, all NSX edge devices contain a built in firewall which blocks all traffic due to a global deny rule. If you need to be able to ping the management address / external uplink interface address or putty in to the management IP from the outside network, you’d need to enable the appropriate firewall rules within the firewall section of the DLR. Example rules shown below.

- That is it. You now have a DLR with 3 interfaces deployed as follows

- Uplink interface – Connected to the external network using a VLAN tagged dvPortGroup

- Web-Interface (internal) – An internal interface connected to a VXLAN network (virtual wire) where all the Web server VMs on IP subnet 192.168.2.0/24 reside. The interface IP of 192.168.2.1 is set as the default gateway for all the VMs on this network

- App-Interface (internal) – An internal interface connected to a VXLAN network (virtual wire) where all the App server VMs on IP subnet 192.168.1.0/24 reside. The interface IP of 192.168.1.1 is set as the default gateway for all the VMs on this network

- App VMs and Web VMs could not communicate with each other before, as there was no way to route between the 2 networks. Once the DLR has been deployed and connected to the interfaces as listed above, VMs on one subnet can talk to VMs on the other.
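As a quick sanity check of the addressing above, here is a small Python sketch using the standard `ipaddress` module. It models the two internal interfaces with the subnets and gateway IPs from this post, works out which default gateway a given VM should use, and tells you whether traffic between two VMs has to be routed by the DLR. The VM IP addresses and the helper function names are hypothetical.

```python
from ipaddress import ip_address, ip_interface

# Internal LIFs as configured in this post (interface IP / prefix length).
LIFS = {
    "Web-Interface": ip_interface("192.168.2.1/24"),
    "App-Interface": ip_interface("192.168.1.1/24"),
}


def gateway_for(vm_ip: str):
    """Return (LIF name, gateway IP) for a VM, or None if no LIF matches."""
    addr = ip_address(vm_ip)
    for name, lif in LIFS.items():
        if addr in lif.network:
            return name, str(lif.ip)
    return None


def needs_routing(src_ip: str, dst_ip: str) -> bool:
    """True when both VMs sit behind different LIFs of the DLR."""
    src, dst = gateway_for(src_ip), gateway_for(dst_ip)
    return src is not None and dst is not None and src != dst


# Hypothetical VM addresses for illustration.
print(gateway_for("192.168.2.10"))
print(needs_routing("192.168.2.10", "192.168.1.10"))
```

A web VM at 192.168.2.10 should therefore be given 192.168.2.1 as its default gateway, and any traffic from it to the 192.168.1.0/24 subnet is what the DLR now routes within the hypervisor.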

That’s it, and it’s as simple as that. Now obviously you can configure these DLRs to use dynamic routing via OSPF or BGP should you deploy them in an enterprise network with external connectivity. I’m not going to go in to that here, but the above should give you a decent, high level understanding of how to deploy the DLR and get things going to begin with.

In the next post, we’ll look at Layer 2 bridging.

Slide credit goes to VMware…!!

Cheers

Chan