I’ve been watching the ongoing ransomware incident affecting Travelex and thought I’d put together a short post listing some valuable collateral that shows how customers can use Veeam data protection and management tools to help detect, prevent and recover from such attacks.

The Backdrop

Travelex, an international foreign exchange company, is currently offline due to a ransomware infection and is being held to ransom to the tune of $6m. It is thought to have been infected by the “Sodinokibi” ransomware. More details can be found here. The downtime appears to be forcing Travelex offices to revert to paper-based transactions, and it is also affecting a large number of partners such as banks and retail stores, all of whom rely on Travelex systems for foreign currency transactions, according to this article from the Mirror.

The threat

The ransomware threat isn’t new, and it has had a notable impact on many organisations in the past. Does anyone remember when the WannaCry ransomware infected a large number of computers in the UK’s National Health Service, causing significant disruption to public health care?

Given the increased digitization of organisations with more and more inter-connectivity and dependence on digital information for their vital business operations, this threat is significantly higher today than it was yesterday.

The impact of a ransomware attack can span multiple dimensions, from reputational damage to the cost of downtime (lost business) to the cost of recovery and clean-up. Depending on the size of the organisation, these can quite easily push the total cost of damage into millions of dollars or pounds.

Typical attack vectors include ransomware files delivered directly to users, infected drives, and infected URLs visited by unsuspecting users in the organisation.

Prevention

Prevention is the best form of remediation, they say. With threats like ransomware, prevention is always preferred given the complexity of remediation and recovery. Defense in depth, meaning a layered defense system consisting of tools (such as monitoring and analytics tools and security solutions) and well-implemented organisational policies and processes (role-based access, two-factor authentication, periodic account access audits, etc.), is one of the best ways of preventing ransomware infections.

Remediation

It is imperative for every organisation today to have a rock-solid data management and data protection solution in place to recover from incidents like these. Backup and data protection are often treated as an afterthought, but once confronted by a situation like the one facing Travelex today, the importance of these measures is realized quite quickly. A well thought-out data protection strategy conforming to the 3-2-1 rule (3 copies of data, stored on 2 separate forms of media such as disk, object storage or tape, with 1 copy held offsite, for example in another DC in a different geography, in the cloud or with a service provider) is absolutely mandatory for any organisation looking to protect its most important asset today: its data.
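To make the 3-2-1 rule concrete, here is a minimal Python sketch that checks a list of data copies against it. The `BackupCopy` class, its field names and the sample copies are all hypothetical and only for illustration; this is not tied to any Veeam API.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class BackupCopy:
    """One copy of a data set (hypothetical model, for illustration only)."""
    name: str
    media: str     # e.g. "disk", "object", "tape"
    offsite: bool  # True if the copy is held outside the primary site


def meets_3_2_1(copies: list[BackupCopy]) -> bool:
    """Return True if the copies satisfy the 3-2-1 rule."""
    enough_copies = len(copies) >= 3                   # 3 copies of the data
    enough_media = len({c.media for c in copies}) >= 2  # 2 forms of media
    has_offsite = any(c.offsite for c in copies)        # 1 copy offsite
    return enough_copies and enough_media and has_offsite


# Assumed example layout: production data, a local backup, a cloud copy.
copies = [
    BackupCopy("production", "disk", offsite=False),
    BackupCopy("local backup repository", "disk", offsite=False),
    BackupCopy("cloud object storage", "object", offsite=True),
]
print(meets_3_2_1(copies))
```

Dropping the cloud copy from the list makes the check fail on all three counts, which is the situation many organisations only discover during an incident.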

Veeam & Ransomware

As a leader in data management, both in the cloud and in the data center, Veeam has a number of tools and solutions that can help customers both prevent and recover from a ransomware attack. The Veeam ONE monitoring solution, for example, can track and alert on unusual activity in the data center such as high CPU usage and an increased data change rate (both common signs of active ransomware encryption), which can help vigilant administrators notice in-flight infections and take preventive measures.

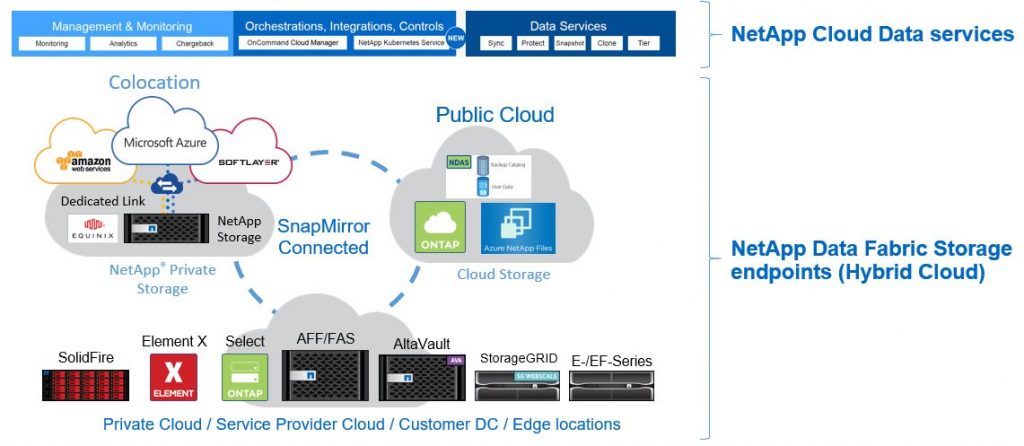
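The idea behind a change-rate alert can be sketched in a few lines of Python. This is only an illustration of threshold-based detection under assumed numbers; the function name, the factor of 3 and the sample values are hypothetical and do not represent how Veeam ONE implements its alarms.

```python
from statistics import mean


def unusual_samples(baseline, recent, factor=3.0):
    """Return the recent samples that exceed factor x the baseline average."""
    threshold = factor * mean(baseline)
    return [value for value in recent if value > threshold]


# Hypothetical change rates (GB changed per backup run) for a single VM.
baseline_gb = [4.0, 5.0, 6.0, 5.0]
recent_gb = [5.5, 48.0]
print(unusual_samples(baseline_gb, recent_gb))
```

A sudden jump in changed data like the second recent sample is the kind of signal an administrator would want to investigate, since mass encryption rewrites far more blocks than normal day-to-day activity.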

A well-architected Veeam Backup & Replication solution that utilizes disk-based snapshots from Veeam alliance partners such as NetApp, Pure Storage and HPE as part of its backup strategy can immediately revert to the immutable snapshots during recovery, with near-zero RTO and RPO, to resume business operations from an uninfected set of data. Furthermore, Veeam Backup & Replication can integrate with immutable object storage providers, such as those leveraging the S3 Object Lock capability (e.g. the Cloudian HyperStore solution), to ensure that your backup data itself is not vulnerable to ransomware-induced malicious encryption. Some ransomware also targets specific files for encryption rather than the full disk, and Veeam file-level recovery can help customers quickly restore the relevant files and resume business operations. While these are a few key highlights, there are many more capabilities in the Veeam data protection suite that customers can harness to combat the threat of ransomware.

Below is a handy list of collateral that customers may find useful for understanding how Veeam data management solutions can be leveraged to prevent and recover from ransomware infections:

- https://www.veeam.com/blog/one-ransomware-alarms.html

- https://go.veeam.com/ransomware-awareness-education

- https://www.veeam.com/wp-execbrief-conversational-ransomware-defense-survival.html

- https://www.veeam.com/blog/how-to-protect-against-ransomware-data-loss-and-encryption-trojans.html

- https://www.veeam.com/videos/recover-backup-from-ransomware-attack-9920.html

- https://www.veeam.com/wp-veeam-availability-suite-protection-against-ransomware-threats.html

Another handy post by one of the Veeam Vanguards here outlines how to benefit from Veeam’s Cloud Tier immutability (use the Chrome translator to translate from Italian to English).

Are you going to wait until something similar happens to your organisation, or will you proactively put measures in place now to handle the very real threat of ransomware? Perhaps it’s time to include a ransomware recovery scenario in your annual Disaster Recovery and Business Continuity (DR & BC) test plan after all!